A technology company turned down a government contract and got labeled a national security threat for it.

That's the short version of what happened between Anthropic and the Pentagon in early 2026. The longer version involves a $200 million military contract, a refusal to allow AI to make kill decisions without human oversight, a Trump administration retaliation order, and a federal judge who said Anthropic was likely to win. It also involves OpenAI quietly taking the contracts Anthropic refused.

If you're a Christian, this story is worth your attention. Not because it's a political controversy (it is), but because the questions underneath it are theological ones: Who bears moral responsibility when a machine takes a life? What does faithfulness look like when principle collides with profit? And what does it mean to serve two masters when one of them is the Department of Defense?

What Actually Happened

In July 2025, the Department of Defense awarded Anthropic a $200 million contract, making Claude the first frontier AI model cleared for classified military use. It was a significant milestone. Then the trouble started.

Defense Secretary Pete Hegseth pushed Anthropic to allow the government to use its technology for "any lawful use," including fully autonomous weapons systems and mass surveillance of American citizens. Anthropic CEO Dario Amodei refused. He said the technology is not yet sufficiently reliable or safe for those kinds of uses, and that Anthropic could not "in good conscience" allow Claude to be deployed for autonomous lethal decisions or sweeping domestic surveillance.

President Trump responded by ordering the U.S. government to stop using Anthropic's products entirely. The Pentagon designated Anthropic a "supply chain risk," effectively labeling a company that had just received a major military contract as a national security threat, because it declined to remove its own ethical constraints.

On March 26, 2026, federal Judge Rita Lin of the Northern District of California temporarily blocked the Pentagon's designation. She ruled that Anthropic has a high likelihood of ultimately winning its case and that the government's actions raised serious First and Fifth Amendment concerns. Meanwhile, OpenAI, which had no such qualms about autonomous weapons use, stepped in to fill the gap.

That's the factual record. Now let's talk about what it means.

Killing Without a Conscience in the Loop

Autonomous lethal weapons systems are not science fiction. They exist, they are being developed, and the question of whether to allow AI to make the final targeting decision without a human in the loop is a live policy debate right now.

Here is why this matters theologically. Romans 13:4 describes government authority this way:

"For the one in authority is God's servant for your good. But if you do wrong, be afraid, for rulers do not bear the sword for no reason. They are God's servants, agents of wrath to bring punishment on the wrongdoer." - Romans 13:4

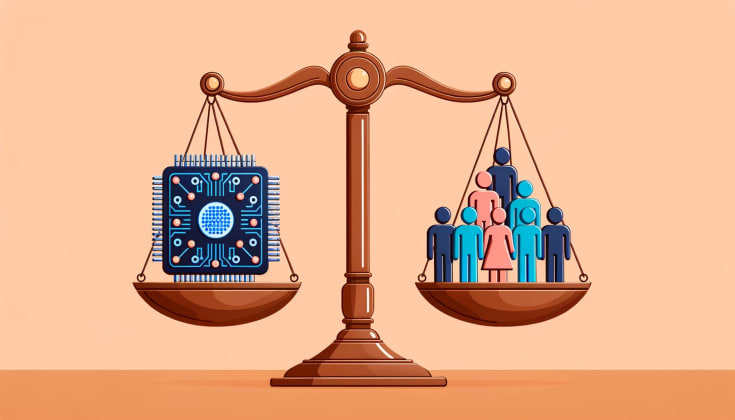

Notice the language. Government officials "bear the sword." The responsibility is personal. The moral weight falls on a human being who must answer for how that authority is used. That is the biblical picture of legitimate governance: power exercised by accountable persons who can be held responsible before God and before community.

When you delegate lethal decision-making to a machine, you are attempting to sever that accountability. A soldier who pulls a trigger can be prosecuted for a war crime. A general who orders an unlawful strike faces consequences. When an algorithm identifies a target and executes a strike without human authorization, there is no one to blame, which means the killing is accountable to no one.

Christians who take Romans 13 seriously should find that concerning. The authority to take life carries moral weight precisely because a human being must own it. Offloading that decision to a system that has no conscience, no remorse, and no capacity to stand before God is not just a technical problem. It is a theological one.

The Surveillance Question

Anthropic also refused to support mass surveillance of American citizens. This refusal is easy to dismiss as corporate positioning, but the reasoning holds up under scrutiny.

Proverbs tells us something about the relationship between power and corruption:

"Like a roaring lion or a charging bear is a wicked ruler over a helpless people." - Proverbs 28:15

History is not subtle on this point. Governments with access to comprehensive surveillance capabilities will eventually use those capabilities to suppress dissent, monitor political opponents, and consolidate power. The original intentions may be defensive. The capability itself is corrosive. The East German Stasi. The Soviet internal monitoring apparatus. China's social credit system. Mass surveillance technology is not neutral, and the claim that any particular government would exercise it only wisely and only for legitimate security purposes has no historical support.

The wise see where a road leads before they walk down it.

"The prudent see danger and take refuge, but the simple keep going and pay the penalty." - Proverbs 22:3

Anthropic's refusal was not naive idealism. It was an accurate assessment of what happens when powerful surveillance tools are handed over without constraints.

When a Competitor Takes the Contracts You Refused

Here is the uncomfortable part. Anthropic's refusal did not eliminate the problem. OpenAI stepped in. The Pentagon is proceeding with AI military contracts. The weapons development continues. The surveillance infrastructure is being built. Anthropic's principled stand did not stop any of it.

So why does it matter?

Because complicity is real. Because what you participate in shapes what you are. Because there is a difference between a person who refuses to make a weapon and a person who manufactures one for hire.

Isaiah speaks to the fear that makes refusal so hard:

"So do not fear, for I am with you; do not be dismayed, for I am your God. I will strengthen you and help you; I will uphold you with my righteous right hand." - Isaiah 41:10

The verse is often quoted for personal comfort, but the context is important. God is calling his people to faithfulness when the cost is real. The fact that someone else will do what you refuse to do does not make refusal pointless. It makes it harder, and it makes it more necessary, because someone has to demonstrate that conscience exists as a category.

Paul, writing to the Corinthians, pressed the same point:

"I have the right to do anything, but not everything is beneficial. I have the right to do anything, but not everything is constructive." - 1 Corinthians 10:23

Legality is not the standard. The Pentagon contract was legal. Autonomous weapons development is legal. Mass surveillance under national security authority is, apparently, legal. That tells you exactly nothing about whether any of it is good.

What a Corporate Stand Actually Witnesses To

Anthropic did not just lose a contract. The company absorbed a short-term financial and reputational hit that most companies would not voluntarily take on. And something unexpected happened in response: more than a million people per day signed up for Claude in the weeks after the dispute became public, pushing it past ChatGPT and Gemini as the top AI app in more than twenty countries.

People notice when an organization behaves with integrity. They remember it. They reward it.

This is not a prosperity gospel argument. Doing the right thing does not guarantee financial success, and Anthropic's numbers could reverse next quarter. But the response points to something real: there is a hunger for organizations that mean what they say about ethics, that refuse when refusal costs them, that hold a line when powerful institutions are pushing them to cross it.

Matthew 6:24 is unambiguous about what the choice looks like:

"No one can serve two masters. Either you will hate the one and love the other, or you will be devoted to the one and despise the other. You cannot serve both God and money." - Matthew 6:24

Anthropic chose one master in this moment. Whether it holds that choice under continued pressure is a question worth watching. But the choice itself was a witness, and the response from the public suggests the witness landed.

What This Looks Like in Your Own Life

You are probably not a defense contractor or an AI company founder. But the structure of this situation is one you will encounter.

You will be asked to participate in something that conflicts with your conscience. The participation will be legal. The financial or professional cost of refusal will be real. Someone else will do it if you don't. Your refusal will not stop the thing from happening.

And the question will still be: what do you do?

"Rescue those being led away to death; hold back those stumbling toward slaughter. If you say, 'But we did not know about this,' does not he who weighs the heart perceive it?" - Proverbs 24:11-12

The claim of ignorance does not work as a moral exit. Knowing that a thing causes harm and declining to engage with that fact is not a neutral position before God. Dario Amodei knew what Anthropic's technology could do in the wrong application. He declined to pretend otherwise to keep a government contract. That is the shape of conscience in a high-pressure situation.

Where in your work, your investments, your daily decisions, are you being asked to look away from what you know to be true? Where are you holding the line, and where have you let it slip because the cost of holding it was too high?

Obedience to God Over Obedience to Institutions

The apostles faced a version of this question with no ambiguity at all. In Acts 4, the religious and political authorities ordered them to stop speaking about Jesus. Their response has not been improved on:

"Which is right in God's eyes: to listen to you, or to him? You be the judges! As for us, we cannot help speaking about what we have seen and heard." - Acts 4:19-20

There are moments when faithful witness requires saying no to powerful institutions. The apostles did it. People have done it throughout church history at great cost. Anthropic, in a much smaller and more ordinary way, did it in a business dispute. The principle is the same: there are things that are not negotiable, and holding them costs something real.

This does not mean every corporate decision is analogous to apostolic witness. It means the category of "sometimes you have to say no to the powerful thing" is not obsolete. It applies in boardrooms as well as in prison cells.

Practical Application: Three Questions to Sit With

1. What ethical lines have you drawn in your own work? Not theoretical ones. Real ones. Specific things you will not do regardless of what it costs professionally. If you cannot name any, that is worth examining.

2. Who benefits from your yes? When you agree to participate in something, trace the downstream effects. Who ends up holding the weapon, living under the surveillance, experiencing the consequence? The person who designs the tool and the person who pulls the trigger are not morally equivalent, but they are not entirely separate either.

3. What would it cost you to say no once? Anthropic said no to the U.S. government and took a significant short-term financial hit. The company is still standing and now has a stronger reputation for integrity than its competitors. The cost of integrity is usually survivable. The cost of abandoning it accumulates quietly.

Sources

- Deadline looms as Anthropic rejects Pentagon demands it remove AI safeguards - NPR

- OpenAI announces Pentagon deal after Trump bans Anthropic - NPR

- Pentagon labels AI company Anthropic a supply chain risk - NPR

- Judge Blocks Pentagon Move Against Anthropic in AI Ethics Dispute - National Catholic Register

- Anthropic's case against the Pentagon could open space for AI regulation - Al Jazeera

- When AI Ethics Collide with National Security: Anthropic Challenges Pentagon Blacklisting - Syracuse Law Review

Frequently Asked Questions

Isn't Anthropic just protecting its reputation, not its ethics?

Possibly both. Reputation and ethics are not always in conflict, and the fact that integrity can also be good for business does not make the integrity fake. What is notable here is that Anthropic refused the Pentagon's demands before the public backlash, not after it. The company gave up a $200 million contract and absorbed a "supply chain risk" designation from the U.S. government. That is a real cost. The reputation benefit came later and was not guaranteed. Cynicism about motive does not change the fact that the refusal happened and the alternative would have been worse.

Should Christians care about AI weapons policy?

Christians should care about any question that involves accountability for life and death, protection of the vulnerable, and the exercise of power without conscience. Autonomous weapons and mass surveillance are not niche technical issues. They are questions about who is responsible when innocent people are killed by algorithms and who watches the citizens when the government has unlimited capacity to watch everyone. Both questions are theological before they are political.

What about national security? Don't we need AI for defense?

Yes, and that is not what Anthropic refused. The company had an active military contract for classified use. What it refused was the specific application of autonomous lethal targeting and mass domestic surveillance. You can support defense applications of AI and still believe that a human being must authorize the decision to take a life. Those are not in conflict. The question is not whether AI has a role in national defense. The question is whether any decision that ends a human life should be made without a human being who is accountable for it.